Introduction

Amazon S3 (Simple Storage Service) is a cloud object storage service, meaning that we can use it for storing and sharing files in the cloud. Compared to the cloud drives we commonly use every day, such as Dropbox, Google Drive, Proton Drive, and Box, Amazon S3 is more of a backend service that provides the underlying remote storage. On top of such a backend, there are wrapper apps that provide a frontend interface so that users can access the S3 storage easily.

Although AWS does provide a web interface where we can browse, upload, and download files, it is not really meant for daily use, since getting to it is not as straightforward as with the everyday cloud drives.

Typically, there are two main ways to use S3 storage. One is to mount the S3 bucket locally using tools like s3fs, along with a few other options mentioned in Ref. [1].

The other typical way is to use S3 as the storage backend for web-based drive apps.

In my case, I have a VPS (refer to here and here for more about my VPS setup with Oracle) on which I run the web-based file servers SFTPGo [2], FileCodeBox [3], and KOD Cloud [4]. All of these can use S3 storage as their backend.

In this tutorial, I will cover the procedure for setting up an AWS S3 bucket (the term Amazon uses for a storage container in S3). I will also note down some issues I came across when first playing around with S3 as a beginner.

Setup

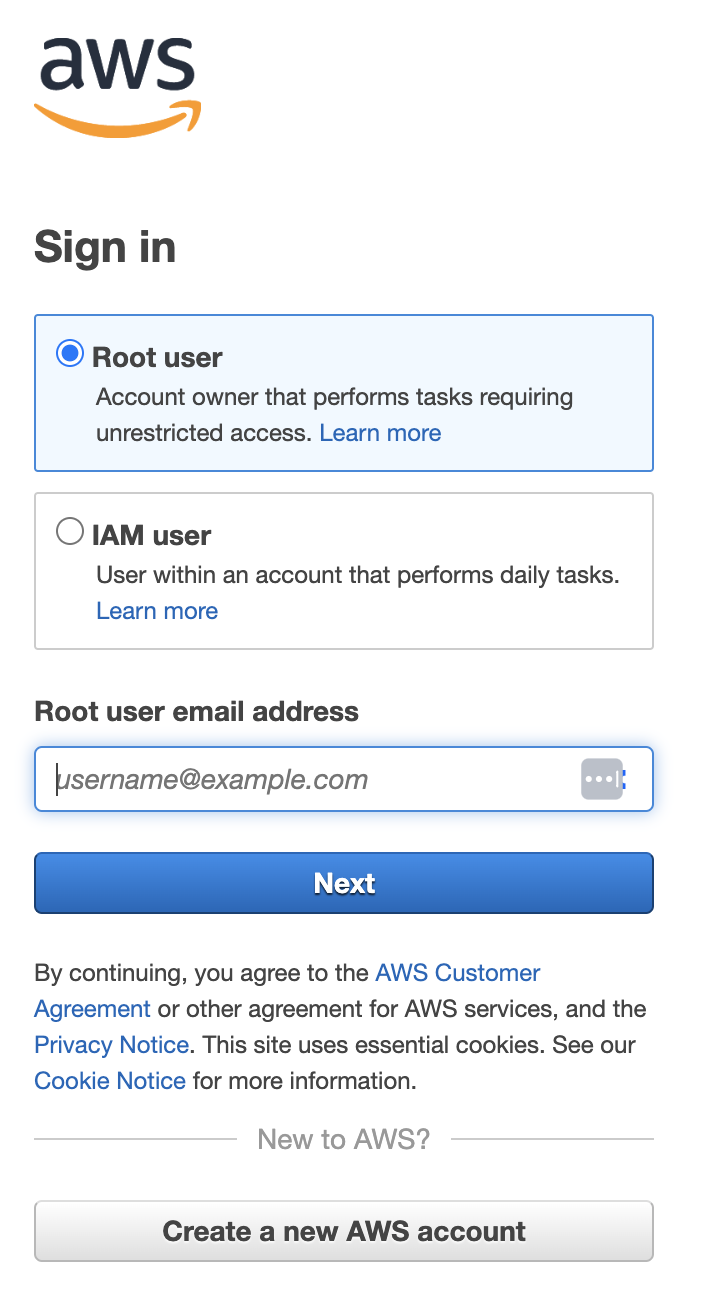

First, we can go to https://aws.amazon.com/s3/ where we can log in , if we have already got an account, or register one otherwise. The sign-in screen will look like this,

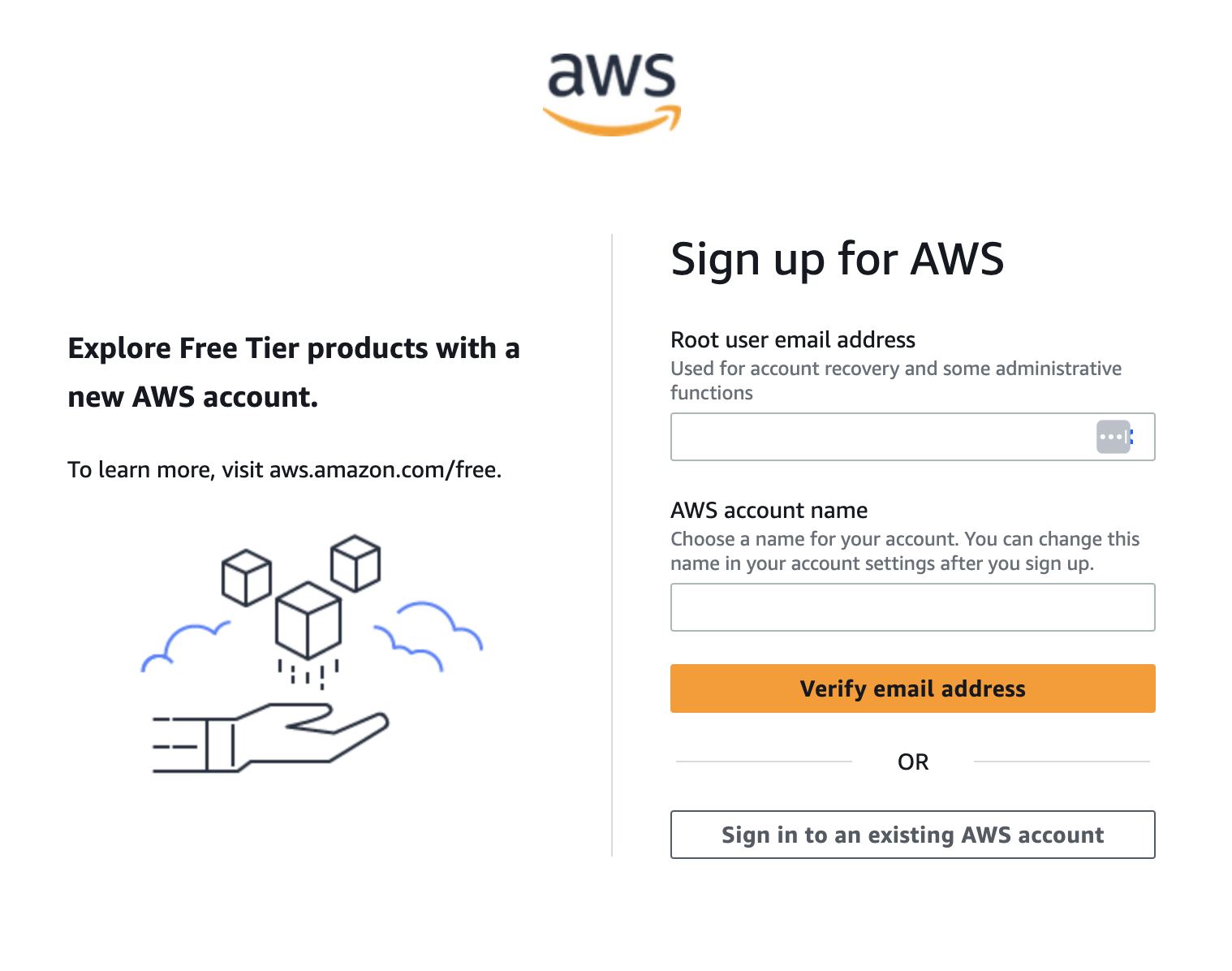

and the register screen will be like this,

Notice that both the log-in and register screens mention a root user. This is just like any normal user account we create elsewhere. It is called the root user because, once logged into AWS, the root user can create other users inside the account through IAM (Identity and Access Management). Amazon provides different sign-in entry points for these two types of user account, which is why we see two options on the log-in screen.

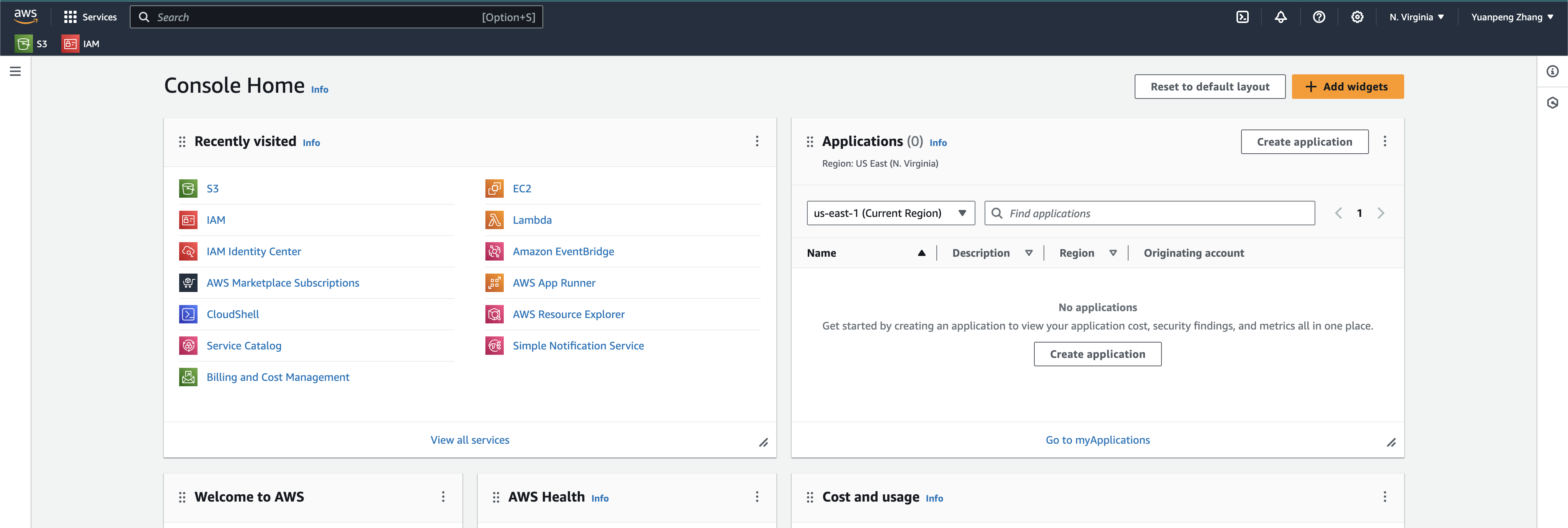

Once we have an account – suppose we are talking about the root account here – we can then log

into the AWS control panel. After successful login, we will see screen like follows,

In the main console page shown above, we can use the search bar at the top-left to search for S3 and then go to the S3 console, where we can set up a so-called bucket.

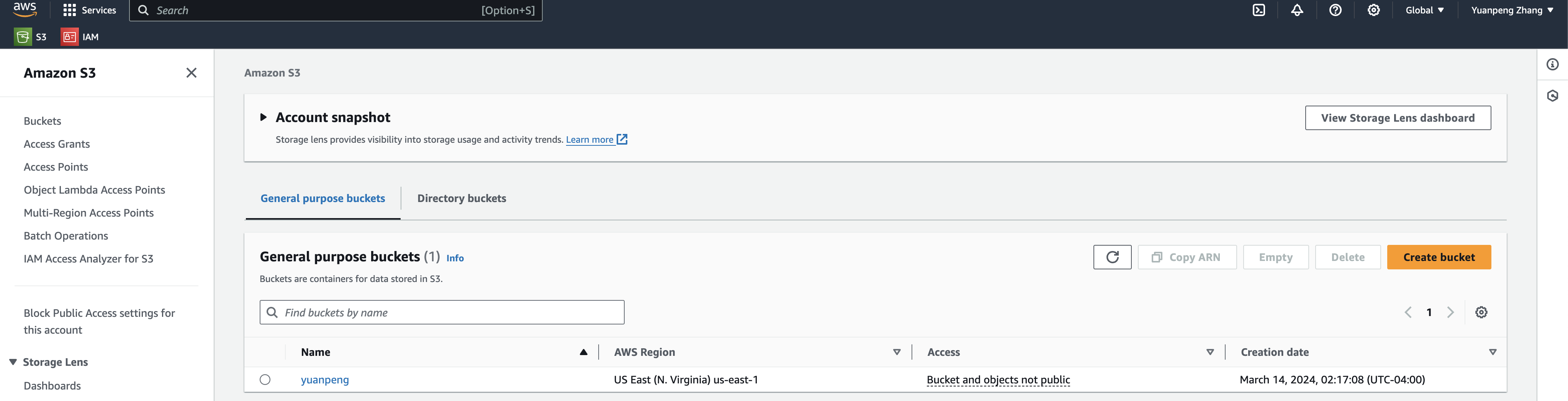

Basically, this is just the terminology used by AWS for storage. We can set up multiple buckets

for different uses and they are independent from each other. As shown below, I have set up only

one bucket,

S3 charges differently from typical consumer cloud drive services (unsurprisingly, since, as mentioned above, S3 is more of a backend service): the charge is based on actual storage usage. We put files (S3 calls them objects) in the bucket, and S3 calculates the cost from the size of each object and how long it is stored during the month. Other factors also enter the bill, such as requests and data transfer, but the basic idea stays the same. For more details, refer to Ref. [5].

Tips & Notes

- To find the account ID and alias (user name), go to IAM dashboard => AWS Account. The root user can also have an alias.

- To set up an access key, which consists of an Access Key ID and an Access Key Secret, go to IAM dashboard => Quick Links => My security credentials, and set up the access key there.

- To set up MFA (multi-factor authentication), go to IAM dashboard => Quick Links => My security credentials => Multi-factor authentication (MFA).

The

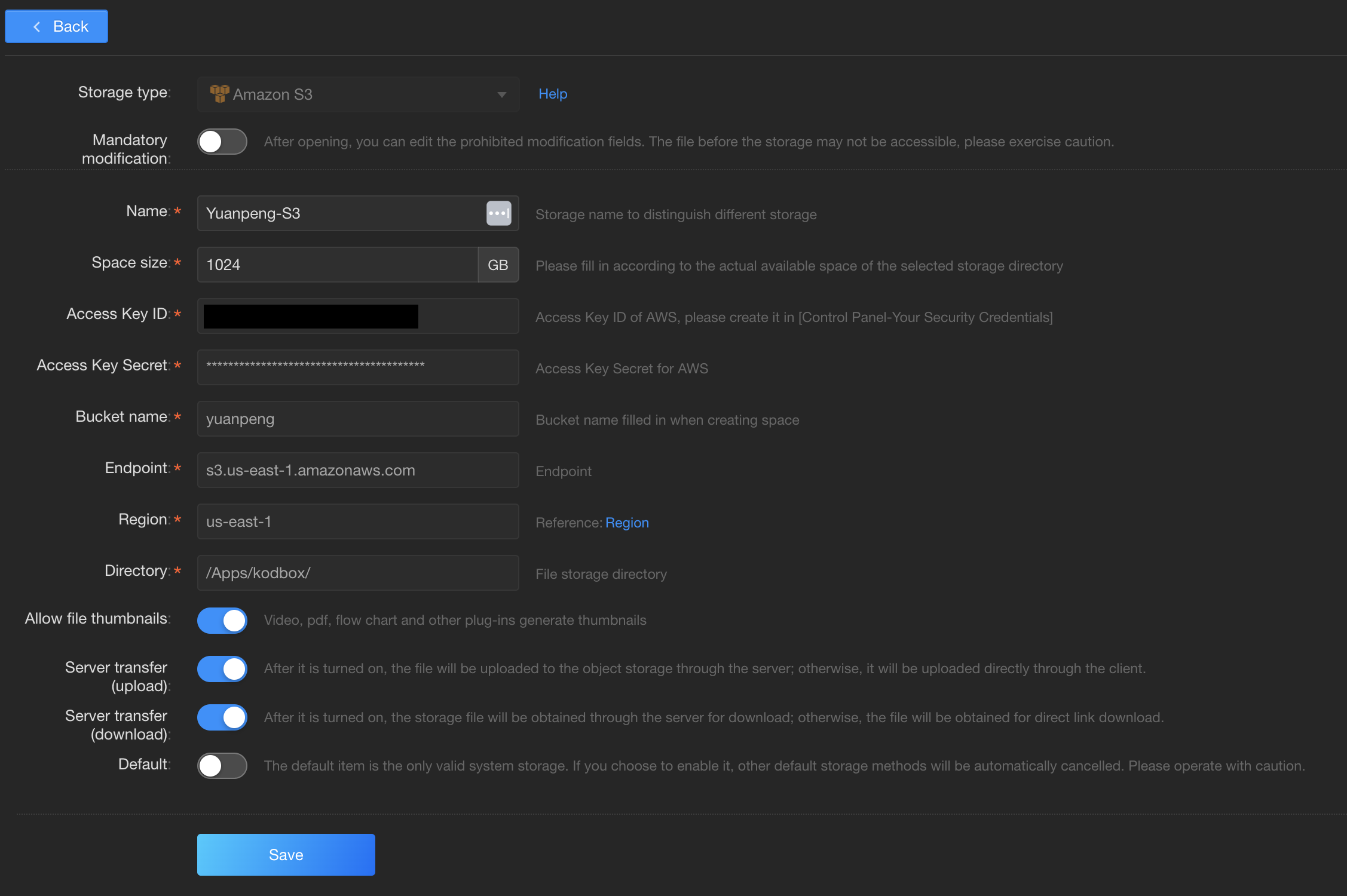

Access Key IDandAccess Key Secretare commonly used in cloud drive configurations to connect to our S3 bucket.Here is the example configuration for

KOD cloud,

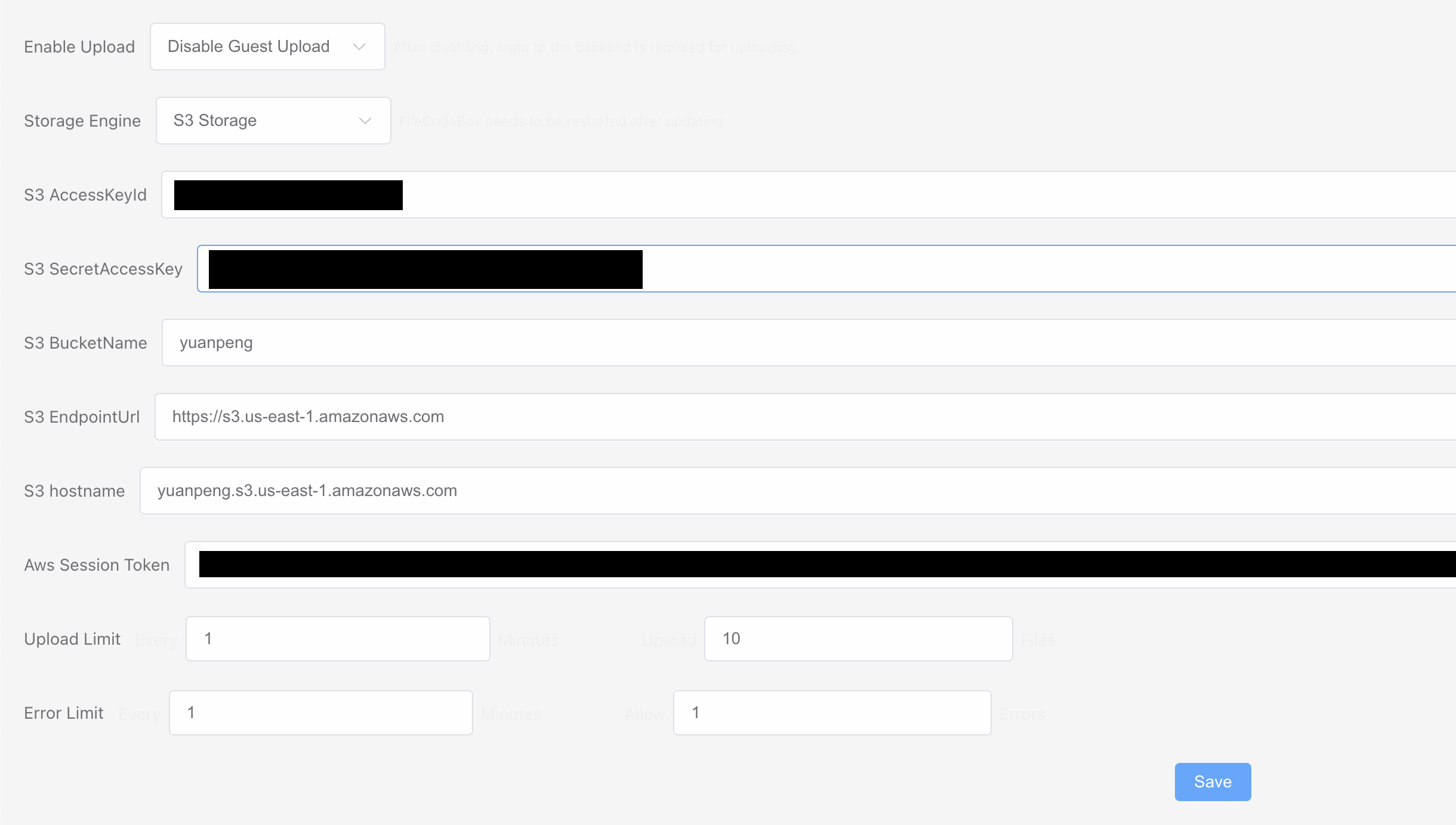

Here is the example configuration for

FileCodeBox,

For the

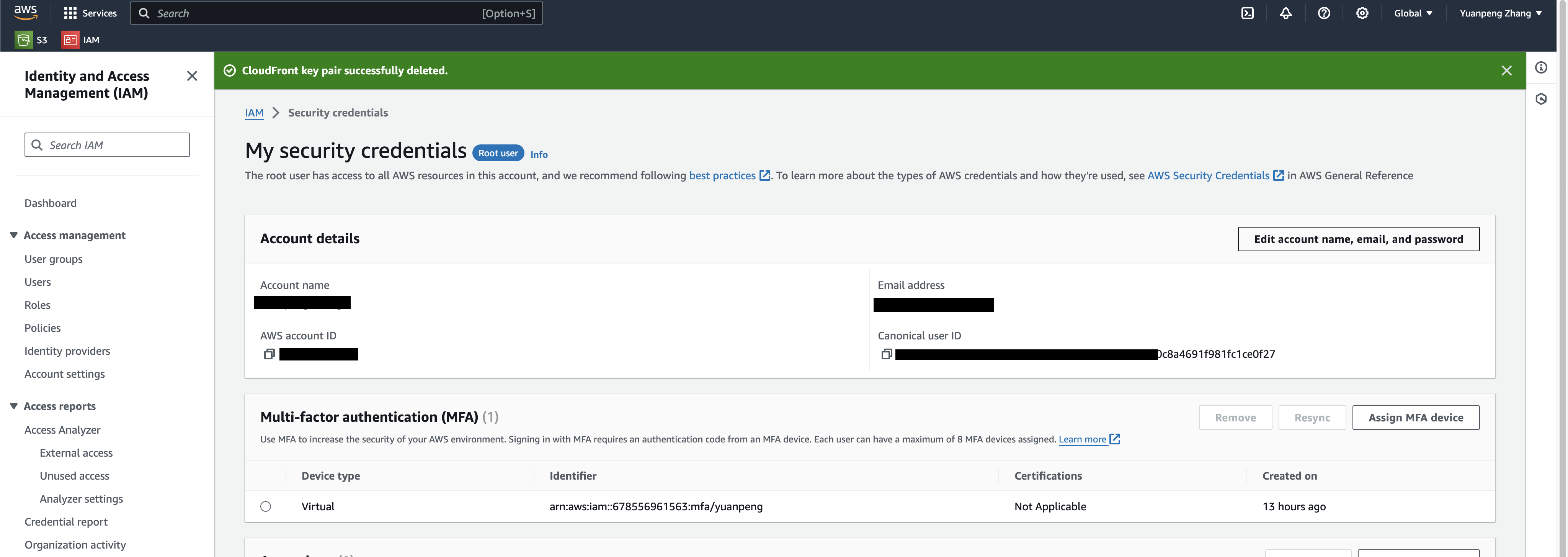

FileCodeBoxconfiguration, we are also required to have the access token as shown in the picture above. To obtain the token, we need to first install the AWS CLI tool, following the instruction in Ref. [6]. Then we can run the following commands,aws configure aws sts get-session-token --duration-seconds 900 --serial-number "arn:aws:iam::678556961563:mfa/yuanpeng" --token-code 846532The

serial-numbercan be obtained as shown in the picture below,

in which we are looking for the

Identifier. Thetoken-coderefers to the MFA token.Sometimes our access key and secret may have been updated since last time of generation of the token, in which case we need to run aws confiure again to fill in the new key and secret. [7]

- Endpoint: refer to Ref. [8] for details about the S3 endpoints.

- About the hostname, we can refer to the FileCodeBox configuration above: the first part of the URL is the bucket name, and we can work out the hostname to fill in from the endpoint and our bucket name, following the same format as shown in that picture.

- To mount the S3 drive locally, we have multiple options. s3fs-fuse [9, 10] is one of them, though it is often reported to be slow [11]. On Windows, there are options like Rclone, S3Drive, etc. [11]. To use s3fs-fuse, we can run:

  ```shell
  s3fs yuanpeng ams_yuanpeng -o passwd_file=${HOME}/.passwd-s3fs -o allow_other -o umask=000
  ```

  where `.passwd-s3fs` is our password file containing the Access Key ID and the Access Key Secret in the format `Access Key ID:Access Key Secret`, `yuanpeng` is the bucket name, and `ams_yuanpeng` is an empty local directory to mount the S3 drive to.

- Here are some useful links about permission policy settings in S3: Refs. [12-15].
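For the FileCodeBox session token tip above, the serial number follows the pattern `arn:aws:iam::<account-id>:mfa/<device-name>`, so it can be assembled from the account ID shown in the IAM dashboard. The sketch below wraps the `aws sts get-session-token` command from that tip; the helper names `mfa_arn` and `session_credentials` are hypothetical. It prints the temporary `AccessKeyId`, `SecretAccessKey` and `SessionToken` together because, as far as I know, STS issues the session token as part of a set of temporary credentials meant to be used together. Note also that 900 seconds is the shortest duration `get-session-token` accepts, so a token generated with that value expires after 15 minutes.

```shell
# mfa_arn ACCOUNT_ID DEVICE_NAME
# Prints the MFA serial number: arn:aws:iam::<account-id>:mfa/<device-name>.
mfa_arn() {
    printf 'arn:aws:iam::%s:mfa/%s\n' "$1" "$2"
}

# session_credentials ACCOUNT_ID DEVICE_NAME TOKEN_CODE [SECONDS]
# Calls STS with the current MFA code and prints the temporary
# AccessKeyId, SecretAccessKey and SessionToken on one tab-separated line.
# SECONDS defaults to 900, the same value as in the command above.
session_credentials() {
    aws sts get-session-token \
        --duration-seconds "${4:-900}" \
        --serial-number "$(mfa_arn "$1" "$2")" \
        --token-code "$3" \
        --query 'Credentials.[AccessKeyId,SecretAccessKey,SessionToken]' \
        --output text
}
```

For example, `session_credentials 678556961563 yuanpeng <current MFA code>` reproduces the command above, using the account ID and device name from that example.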
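For the hostname tip above, the virtual-hosted-style hostname is the bucket name followed by the regional S3 endpoint, i.e. `<bucket>.s3.<region>.amazonaws.com`. A tiny sketch with hypothetical helper names; the region `eu-west-2` used below is only a placeholder, and some regions (e.g., the China regions) use a different domain, see Ref. [8].

```shell
# s3_endpoint REGION: regional S3 endpoint for standard AWS regions.
s3_endpoint() {
    printf 's3.%s.amazonaws.com\n' "$1"
}

# s3_host BUCKET REGION: virtual-hosted-style hostname, <bucket>.<endpoint>.
s3_host() {
    printf '%s.%s\n' "$1" "$(s3_endpoint "$2")"
}
```

For example, `s3_host yuanpeng eu-west-2` prints `yuanpeng.s3.eu-west-2.amazonaws.com`.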
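For the s3fs tip above, the password file holds a single `Access Key ID:Access Key Secret` line, and s3fs rejects password files that other users can access (the s3fs-fuse documentation recommends `chmod 600`). A minimal sketch for creating it; the helper name `write_s3fs_passwd` is hypothetical.

```shell
# write_s3fs_passwd FILE ACCESS_KEY_ID ACCESS_KEY_SECRET
# Writes one "ID:SECRET" line, the format s3fs reads from passwd_file.
# The body runs in a subshell ( ... ) so the umask change stays local.
write_s3fs_passwd() (
    umask 077                          # new file is created owner-only
    printf '%s:%s\n' "$2" "$3" > "$1"
    chmod 600 "$1"                     # also tightens an existing, looser file
)
```

Usage: `write_s3fs_passwd "${HOME}/.passwd-s3fs" <Access Key ID> <Access Key Secret>`, then run the s3fs command above. On Linux, `fusermount -u ams_yuanpeng` unmounts the drive again.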

References

[1] https://www.reddit.com/r/aws/comments/o7xffs/s3_bucket_mount_to_windows/

[3] https://github.com/vastsa/FileCodeBox

[4] https://github.com/Handsomedoggy/KodExplorer

[5] https://aws.amazon.com/s3/pricing/

[6] https://docs.aws.amazon.com/cli/latest/userguide/getting-started-install.html

[8] https://docs.aws.amazon.com/general/latest/gr/s3.html

[11] https://www.reddit.com/r/aws/comments/o7xffs/s3_bucket_mount_to_windows/

[12] https://docs.aws.amazon.com/config/latest/developerguide/s3-bucket-policy.html

[14] https://repost.aws/knowledge-center/s3-static-website-endpoint-error